LLM brand mentions are becoming one of the most important signals of visibility in AI-driven search. Your website may rank on page one of Google, your content may be strong, and you may still not appear in AI-generated answers. So why doesn’t a language model mention your brand?

That’s not an SEO problem. It’s an AI problem.

LLMs don’t index websites and rank them. They don’t care about your backlinks or domain authority in the way search engines do. When someone asks an LLM a question, it isn’t retrieving your carefully optimized page from a ranked list. It generates an answer based on patterns it learned during training, potentially combined with real-time retrieved content if the system uses retrieval-augmented generation (RAG).

Brand visibility is shifting. It’s no longer just about showing up in search results. It’s about being woven into the answers that AI systems generate when people ask questions. That’s a fundamentally different mechanism, and most businesses haven’t adjusted to it yet.

This post isn’t a checklist of SEO tactics rebranded as “AI optimization.” Instead, it explains the actual mechanics of how LLM brand mentions work, why most brands never appear, and what you can realistically do about it.

What Most People Get Wrong About AI Mentions

Before diving into how LLMs mention brands, let’s clear away the misconceptions that are actively misleading people.

Misconception 1: “If you rank high in Google, you’ll appear in LLM answers.”

Search rankings and LLM mentions operate on different systems. Google ranks pages based on links, relevance signals, and user behavior. LLMs generate answers based on training data patterns and, in some cases, retrieved content. A brand that ranks #1 for a keyword might never appear in an LLM’s response about that topic. Conversely, a brand with weak SEO might be mentioned frequently by an LLM if it has a strong presence in training data or retrieved documents.

Misconception 2: “LLMs are just summarizing or rephrasing top-ranking pages.”

Some LLMs do use retrieval-augmented generation, which pulls in recent information. But even then, which sources get retrieved depends on multiple factors beyond ranking position. The system might weight source authority, topical relevance to the query’s intent, content freshness, and several other signals. A brand mentioned in 50 different contexts across the web has a higher chance of appearing than a brand with one strong, high-ranking page.

Misconception 3: “You can directly optimize for LLM mentions like you optimize for SEO.”

You cannot. LLM outputs are probabilistic. The same question, asked twice in a row, might produce slightly different answers. A brand that appears in one response might not in another. There’s no “position 1” in LLM answers. There’s only “this brand was included in this particular generation event.”

This probabilistic nature is crucial to understand. It means control is limited. What you can do is influence the probability that your brand gets mentioned. You cannot guarantee it.
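One way to make "influence the probability" concrete is to ask the same question several times and measure how often a brand shows up. The sketch below assumes you have already collected the answers as plain strings; the `mention_rate` helper and the sample answers are hypothetical and exist only for illustration.

```python
import re

def mention_rate(responses, brand):
    """Return the fraction of responses that mention `brand` (case-insensitive)."""
    if not responses:
        return 0.0
    # Word boundaries avoid counting the brand name inside a longer word.
    pattern = re.compile(r"\b" + re.escape(brand) + r"\b", re.IGNORECASE)
    hits = sum(1 for text in responses if pattern.search(text))
    return hits / len(responses)

# Hypothetical answers collected by asking the same question four times.
sample_responses = [
    "For project management, consider Asana or Monday.com.",
    "Popular options include Trello and Asana.",
    "Trello and Jira are common choices.",
    "Many teams use Monday.com for this.",
]

asana_rate = mention_rate(sample_responses, "Asana")
print(f"Asana mentioned in {asana_rate:.0%} of sampled answers")
```

A rate like this is an estimate from a small sample, not a ranking position. It will move as you sample more answers, rephrase the question, or switch models.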

What Is a Brand Mention in LLMs

When we talk about LLM brand mentions, we need to be precise about what we’re counting.

A brand mention in an LLM response occurs when a language model includes your brand name or product name while generating an answer to a user query. This can happen in several contexts:

- Direct recommendations: “If you’re looking for project management software, consider Asana or Monday.com.”

- Comparisons: “Unlike Slack, Discord is designed primarily for gaming communities.”

- Examples within explanations: “Tools like Figma have democratized design software.”

- Contextual references: “The machine learning field advanced significantly after the release of tools like TensorFlow.”

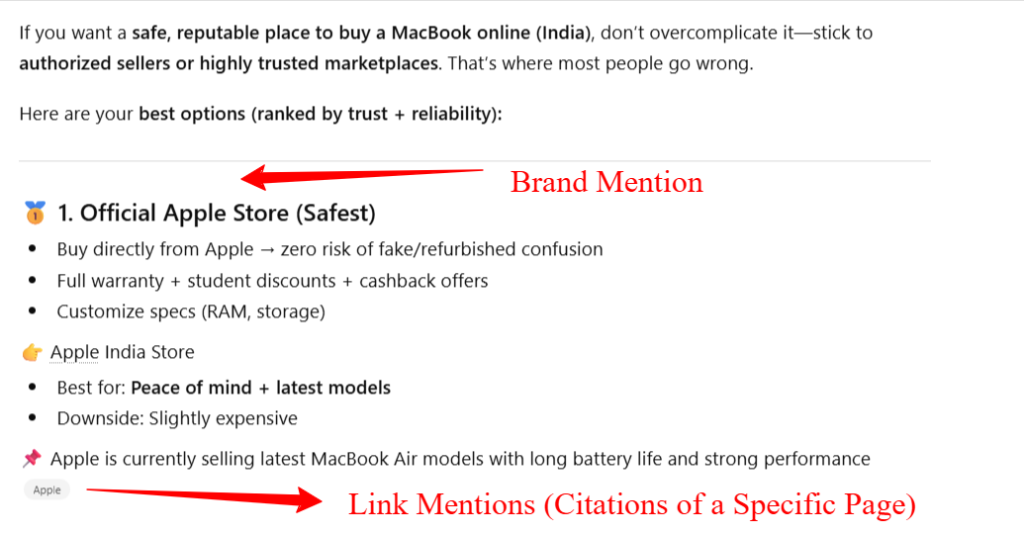

This is different from a citation, which is a formal attribution of information. Many LLMs include citations when they retrieve content from specific sources. A mention doesn’t require a citation. A brand can be mentioned without a link or source attribution.

Mentions also exist on a spectrum. A passing reference to a brand is a mention. So is an in-depth discussion of it. Both matter, though for different reasons.

AI Mentions vs AI Citations

These terms often get confused, so let’s separate them.

An AI mention is when an LLM includes your brand name in its response because it recognizes it as relevant to the topic or question. The mention exists in the generated text. It might appear with or without a link to your site.

An AI citation is when an LLM explicitly attributes information to a source. This is common in RAG systems. When an LLM says “According to [source]” or provides a hyperlink to a page, that’s a citation. Citations create direct traffic. They also signal that the LLM treated your content as authoritative enough to reference.

A summary is different again. It’s when an LLM condenses information from one or more sources into a shorter form. You might be cited in a summary, mentioned without attribution, or not appear at all even if your content was part of the training or retrieval pool.

Why does this distinction matter? Citations drive traffic. They’re also becoming the standard format for AI-generated answers, especially in systems like Google’s AI Overviews. Mentions influence perception. If users see your brand mentioned repeatedly across multiple LLM conversations, they start to recognize it as a category leader. But mentions alone don’t create traffic.

For business strategy, citations are more immediately valuable. For brand authority, consistent mentions across different contexts matter more.

How LLMs Decide to Mention a Brand

This is where the real mechanism lives. LLMs don’t have a deliberate decision-making process like a human would. But their architecture and training create patterns that determine which brands surface.

1: Training Data Patterns

LLMs are trained on massive amounts of text data. During training, the model learns statistical relationships between words, concepts, and entities. If a brand name appears frequently alongside certain topics, the model learns that association.

For example, if an LLM’s training data included thousands of articles about project management, and “Asana” appeared in hundreds of those articles while discussing task management, the model develops a strong association between “Asana” and “project management.” When a user asks about project management tools, the probability that the model generates “Asana” increases.

But frequency alone isn’t the only pattern that matters. Co-occurrence patterns matter too. If “Asana” frequently appears alongside “integration,” “collaboration,” and “automation,” the model learns those associations. If a user asks about a tool that helps with integrations, the model’s associations might make “Asana” more likely to appear.
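The idea of co-occurrence can be illustrated with a toy count. This is only a sketch: real models learn associations statistically during training rather than keeping explicit tallies, and the `cooccurrence_counts` helper and the four-sentence corpus below are made up for the example.

```python
from collections import Counter
import re

def cooccurrence_counts(documents, brand, terms):
    """Count, per term, how many documents contain both `brand` and that term."""
    counts = Counter()
    brand_lower = brand.lower()
    for doc in documents:
        # Reduce each document to a set of lowercase word tokens.
        words = set(re.findall(r"[a-z0-9]+", doc.lower()))
        if brand_lower not in words:
            continue
        for term in terms:
            if term.lower() in words:
                counts[term] += 1
    return counts

# Hypothetical mini-corpus standing in for training data.
toy_corpus = [
    "Asana adds a new integration for calendar tools.",
    "Teams use Asana for collaboration and automation.",
    "Asana automation rules reduce manual work.",
    "A guide to collaboration in remote teams.",
]

counts = cooccurrence_counts(
    toy_corpus, "Asana", ["integration", "collaboration", "automation"]
)
for term, n in counts.most_common():
    print(f"Asana + {term}: {n} document(s)")
```

In this toy corpus, “automation” co-occurs with the brand more often than the other terms, which is the kind of pattern that, at far larger scale, makes a brand more likely to surface for related questions.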

This is why established brands have an advantage. They’ve been mentioned more often, in more contexts, and alongside more relevant terms. They have deeper pattern roots in the training data.

Smaller or newer brands face a different problem. If a brand appears only a handful of times in training data, or only in narrow contexts, the model won’t have learned strong associations with it. It becomes invisible.

2: Retrieval-Augmented Generation (RAG)

Not all LLMs rely only on training data. Many use RAG, which means they search for current information and incorporate it into their answer generation.

When a user asks a question, the RAG system might retrieve recent articles, product pages, social media posts, or other documents relevant to the query. These retrieved documents influence what the LLM generates.

Here’s where visibility becomes tied to retrievability. If your content isn’t easily retrievable through a RAG system’s search process, it might as well not exist. If your brand page uses jargon or terminology that doesn’t match the user’s query language, the retrieval system might skip it.

Consider a user asking, “What’s the best software for managing customer data?” A RAG system searches for documents about “customer data management,” “CRM,” “customer relationship management,” and similar terms. Your product page exists, but if it uses different terminology like “customer engagement platform,” it might not get retrieved. No retrieval means no inclusion, even if your product is actually relevant.
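The terminology gap can be sketched with a deliberately simple retriever. The example assumes a purely lexical scorer (the share of query words found in a page); production RAG systems often use embeddings that bridge some vocabulary differences, so treat this as the worst case. The page texts and the `overlap_score` function are hypothetical.

```python
import re

def tokenize(text):
    """Split text into a set of lowercase word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def overlap_score(query, document):
    """Fraction of query terms that also appear in the document."""
    query_terms = tokenize(query)
    if not query_terms:
        return 0.0
    return len(query_terms & tokenize(document)) / len(query_terms)

query = "software for managing customer data"

# Two hypothetical product pages describing similar products.
pages = {
    "page_a": "A customer engagement platform that unifies every touchpoint.",
    "page_b": "CRM software for managing customer data and sales pipelines.",
}

# Rank pages from most to least overlap with the query.
ranked = sorted(pages, key=lambda name: overlap_score(query, pages[name]), reverse=True)
for name in ranked:
    print(name, round(overlap_score(query, pages[name]), 2))
```

Both pages could describe equally relevant products, but the one that uses the searcher’s own words scores far higher under this kind of matching.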

The freshness advantage of RAG is real, but it’s conditional. Older content that’s frequently referenced and retrieved still appears. New content that’s poorly discoverable remains invisible.

3: NLP and Context Understanding

LLMs don’t just match keywords. They understand semantic meaning and context.

If a user asks about “tools for remote teams,” an advanced LLM can relate this query to collaboration, asynchronous work, distributed communication, and coordination. It tends to surface brands and products associated with those concepts, not just pages that literally contain those words.

This is where entity clarity becomes important. An entity is the model’s internal picture of what a thing is. If your brand has a clear, consistent entity definition, the model can recognize it across contexts. If your brand is vague or poorly differentiated, the model struggles to connect it to relevant queries.

A brand that is consistently described as “project management software” has a clear entity. A brand that’s described as “productivity software,” “collaboration tool,” “task manager,” and “work OS” simultaneously has a muddled entity. The model struggles to know what the brand actually is.

Entity clarity also relates to semantic relationships. If an LLM understands that your brand is related to “remote work,” “asynchronous teams,” and “distributed companies,” it can surface your brand in responses about any of those topics. But this requires consistent messaging across multiple sources.

4: Authority and Cross-Source Validation

LLMs don’t explicitly cross-check sources. But claims repeated across many independent sources leave a stronger pattern than claims that appear once.

When an LLM generates a claim, it’s drawing from patterns learned across many documents. If a fact or claim appears in 50 different, independent sources, the association is strong and the model is more likely to reproduce it. If it appears in only 2 sources, the association is weaker and the model is less likely to include it.

For brands, this means that mentions across multiple independent websites, publications, and platforms increase the likelihood of LLM mention. If your brand is mentioned only on your own website and one partner site, the model has limited external validation. If your brand is mentioned in industry publications, reviews, case studies, and competitor comparisons, the model treats it as more authoritative.
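A rough way to audit this is to count how many distinct, non-owned domains mention your brand. The sketch below assumes you already have a list of URLs where the brand appears (for example, from a media monitoring export); the URLs use reserved `.example` domains and the `independent_domains` helper is hypothetical.

```python
from urllib.parse import urlparse

def independent_domains(mention_urls, own_domains):
    """Return the distinct hostnames that mention the brand, excluding owned ones."""
    domains = set()
    for url in mention_urls:
        host = (urlparse(url).hostname or "").lower()
        # Treat "www.site" and "site" as the same domain.
        if host.startswith("www."):
            host = host[4:]
        if host and host not in own_domains:
            domains.add(host)
    return domains

# Hypothetical list of pages where the brand is mentioned.
mention_urls = [
    "https://www.brand.example/blog/launch",
    "https://brand.example/product",
    "https://news-site.example/reviews/brand",
    "https://news-site.example/roundup",
    "https://review-site.example/brand-vs-others",
]

external = independent_domains(mention_urls, own_domains={"brand.example"})
print(f"{len(external)} independent domains: {sorted(external)}")
```

Five URLs collapse to two independent domains here. Counting domains rather than pages is a simplification, but it reflects the point above: repeated mentions on your own site add little external validation.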

This is why PR, earned media, and third-party mentions matter more for LLM visibility than they do for traditional SEO. A mention in TechCrunch carries more weight in LLM systems than a mention on your own site, not just because it signals credibility, but because it’s an independent source. Multiple independent mentions create a pattern the model recognizes as significant.

5: Sentiment and RLHF Influence

LLMs are trained not just on data, but also on human feedback. This process is called Reinforcement Learning from Human Feedback (RLHF).

During RLHF, human raters evaluate LLM outputs. They score responses on accuracy, helpfulness, safety, and tone. The model learns not just what to say, but how to say it responsibly.

This influences which brands appear in answers. If training data includes negative sentiment about a brand, the model might be trained to deprioritize mentioning it. If a brand is described neutrally or positively across sources, the model is more likely to include it.

Safety considerations also play a role. If a brand has associations with misinformation, scams, or harmful content, the model might avoid mentioning it even if it appears frequently in training data. The RLHF process overrides pure pattern matching when necessary.

This means a brand with consistent, positive mentions across the web has a higher probability of appearing in LLM responses than a brand with controversial or negative associations.

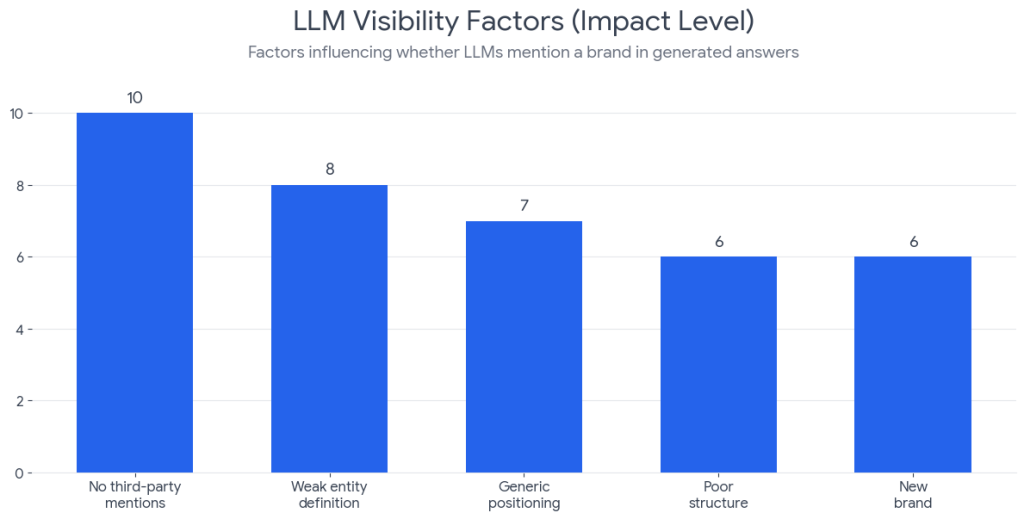

Why Some Brands Never Get Mentioned

Understanding the mechanisms above makes it clear why some brands remain invisible to LLMs.

Weak Entity Definition

If your brand’s purpose, positioning, and category are unclear, LLMs struggle to understand when to mention you. If you’re simultaneously a “CRM,” a “customer engagement platform,” and a “sales tool,” you lack a coherent entity. The model doesn’t know which queries to associate with you.

Lack of Third-Party Mentions

If your brand appears only on your own website, blog, and official channels, the model has limited external validation. It doesn’t recognize you as significant because you’re not being talked about independently. Brands that are discussed by journalists, reviewers, customers, and competitors have stronger presence in LLM patterns.

Generic Positioning

“We’re a productivity software that helps teams work together better.” That describes hundreds of products. An LLM reading this has no reason to mention your brand specifically. Generic positioning doesn’t create memorable, distinct patterns. Specific, defensible positioning does.

Poor Content Structure

If your content doesn’t clearly explain what you do, who you’re for, and why you’re different, RAG systems struggle to retrieve it. Semantic understanding helps, but clarity always wins.

Being New to the Market

If you launched last month, you’re not in training data. RAG can help surface your content, but you won’t benefit from the deep pattern associations that established brands have. Growth in LLM visibility takes time.

Ranking on Search Engines Doesn’t Guarantee AI Visibility

Finally, and crucially: ranking highly on Google doesn’t automatically make you visible to LLMs. These are separate systems with different requirements. You might rank first for your keyword while never being mentioned by LLMs, or vice versa.

How to Increase LLM Brand Mentions (Strategic, Not SEO Checklist)

The goal here isn’t to “optimize for LLMs” in the way you optimize for search engines. That’s impossible. Instead, the goal is to become a stronger pattern that LLMs recognize and reference.

1: Build a Clear Brand Entity

Define precisely what your brand is, what problem it solves, and who it’s for. This definition should be consistent across your website, content, and marketing. When LLMs encounter multiple sources describing your brand in the same way, they develop a clear entity understanding. That clarity makes you more likely to appear when relevant questions are asked.

2: Strengthen Presence Across the Web

Get your brand mentioned in places beyond your own properties. Pitch journalists, contribute to industry publications, participate in roundtables, and be quoted by analysts. When your brand appears in independent sources, the pattern becomes stronger. The model recognizes you across multiple contexts.

This isn’t about paying for mentions. It’s about becoming genuinely relevant to industry conversations. Earned mentions carry more weight in LLM systems than paid placement.

3: Create Answer-Ready Content

Write content that answers specific questions directly. If an LLM uses RAG and retrieves your content, that content should be clear, well-structured, and directly address the question being asked. Content that rambles, buries the answer in lengthy preambles, or requires significant reading to extract value is less likely to be incorporated into an LLM response.

4: Structure Content for Machine Understanding

Use clear headings, short paragraphs, and direct language. Include definitions of terms you use. Use schema markup where it applies. Make it easy for both humans and machines to understand what your content is about. This helps RAG systems retrieve it and LLMs understand its relevance.
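As a minimal sketch of what schema markup can look like, the snippet below builds a schema.org `Organization` description as JSON-LD. The brand name, URLs, and description are placeholders, the `organization_jsonld` helper is hypothetical, and which properties you need depends on your site. Whether any particular AI system reads this markup is not something this example can confirm.

```python
import json

def organization_jsonld(name, url, description, same_as):
    """Build a schema.org Organization description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        # Profiles on other sites that refer to the same organization.
        "sameAs": list(same_as),
    }
    return json.dumps(data, indent=2)

# Placeholder values; replace with your own brand details.
markup = organization_jsonld(
    name="ExampleBrand",
    url="https://brand.example",
    description="Project management software for remote teams.",
    same_as=["https://social.example/examplebrand"],
)

# JSON-LD is typically embedded in the page inside a script tag like this.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Keeping the `description` here identical to the one-line positioning used elsewhere supports the consistent entity definition discussed earlier.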

5: Reinforce Brand and Topic Association

Consistently connect your brand to the specific topics, use cases, and problems you solve. If you’re a customer data platform, write about customer data challenges, customer data integration, customer data governance. Each piece of content reinforces the association between your brand and those concepts. Over time, LLMs develop stronger associations, making you more likely to appear in responses about those topics.

You Cannot Control LLM Mentions

Before anyone launches a campaign claiming to “guarantee LLM visibility,” let’s be clear about what’s actually possible.

You cannot control whether an LLM mentions your brand. You cannot predict with certainty which queries will surface your brand. You cannot game LLM systems the way you can technically game search rankings (though that’s increasingly difficult too).

LLM outputs vary. The same question asked at different times might produce different answers. Sometimes your brand appears. Sometimes it doesn’t. There’s no position 1 in LLM answers. There’s only probability.

This is actually clarifying, because it means you should stop thinking about LLM mentions as something to “optimize” like a ranking position. Instead, think about building genuine authority and presence that makes mention more likely across many interactions.

The Shift from SEO to AI Presence

For two decades, digital marketing strategy centered on search engines. Get ranked, get traffic, grow. That framework is shifting.

SEO focuses on page rankings. AI systems focus on answer inclusion. These require different strategies.

SEO rewards narrow keyword focus, link authority, and ranking signals. AI visibility rewards clarity, broad topical authority, and consistent presence across the web.

SEO is about being first. AI presence is about being relevant, reliable, and recognizable.

This shift happens gradually. Search engines still drive traffic. But as AI answer engines become the primary interface for discovery, the rules change. Understanding AI visibility becomes essential to long-term visibility strategy.

Showing up in AI overviews requires a different mindset. You’re not building pages for rankings. You’re building authority, clarity, and presence to influence how AI systems understand and represent your brand.

What Changes as Discovery Moves to AI Systems

The mechanism of discovery is fundamentally changing. Users increasingly ask AI systems questions and get answers directly, rather than clicking through to websites.

This reshapes competitive advantage. A brand that appears in AI overviews gets visibility without a click. But that visibility is based on different factors than traditional search visibility.

For businesses, this means strategy must adapt. Establishing strong GEO (generative engine optimization) and topic authority becomes crucial as answer engines replace traditional search for many queries.

The brands that thrive in this transition won’t be those that tried to game one system. They’ll be the ones that built genuine authority, clear positioning, and consistent presence across multiple channels.

FAQs

What is an LLM brand mention?

An LLM brand mention is when an AI model includes your brand name in its generated response to a user’s question.

Do LLMs rank websites like Google?

No. LLMs do not rank websites. They generate responses based on patterns in training data and retrieved information.

Can you directly control LLM brand mentions?

No. You cannot directly control them. You can only influence the probability through authority, content, and brand presence.

What is the difference between AI mentions and AI citations?

AI mentions are when your brand name appears in an answer. AI citations are when the model references your content as a source.

Conclusion

LLMs don’t choose brands. They repeat patterns.

The patterns are built from training data, shaped by retrieval systems, influenced by human feedback, and refined by semantic understanding. Your goal is to become the strongest pattern in those systems.

You cannot directly optimize for LLM mentions. You cannot buy your way to guaranteed visibility. You cannot control the output.

What you can do is build a brand that deserves to be mentioned. Make that brand clear. Make it distinct. Build genuine authority. Earn mentions from independent sources. Create content that directly answers questions. Structure that content for understanding.

Do this consistently, across time and across platforms, and the probability that LLMs mention your brand increases.